Mirror of https://github.com/khoaliber/khoj.git, synced 2026-04-28 00:19:25 +00:00

e9e49ea098aa45999736b7cc5499e571f5cb2696

* Add support for custom inference endpoints for the cross encoder model
  - Since there's not a good out of the box solution, I've deployed a custom model/handler via huggingface to support this use case.
* Use langchain.community for pdf, openai chat modules
* Add an explicit stipulation that the api endpoint for crossencoder inference should be for huggingface for now

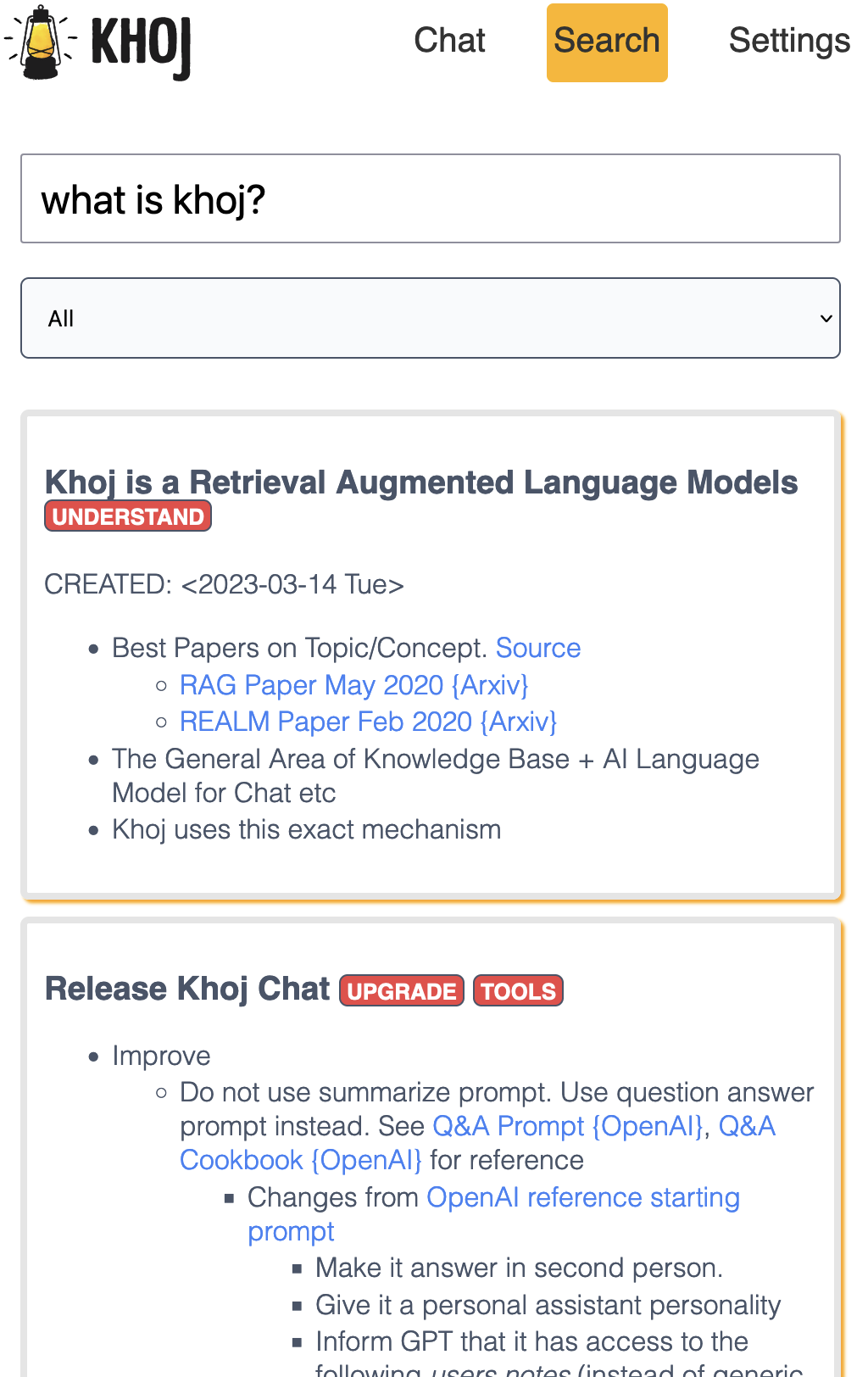

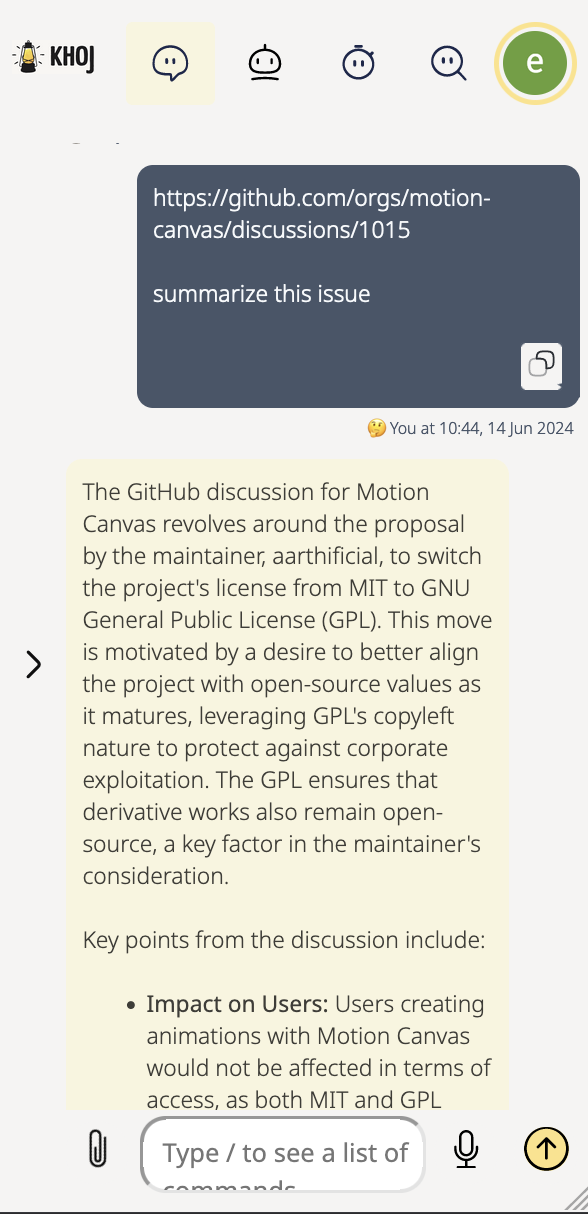

An AI personal assistant for your digital brain

Khoj is a web application to search and chat with your notes, documents and images.

It is an offline-first, open-source AI personal assistant accessible from Emacs, Obsidian or your web browser.

It works with JPEG images, Markdown, Notion, Org-mode and PDF files, and GitHub repositories.

Contributors

Cheers to our awesome contributors! 🎉

Made with contrib.rocks.

Languages

* Python: 51%
* TypeScript: 36.1%
* CSS: 4.1%
* HTML: 3.2%
* Emacs Lisp: 2.4%
* Other: 3.1%