mirror of

https://github.com/khoaliber/khoj.git

synced 2026-03-02 21:19:12 +00:00

3c3e48b18c87a42e3b69af5dbfb86b5c8b68effa

## Benefits

- Support all GGUF format chat models
- Support more GPUs like AMD, Nvidia, Mac, Vulkan (previously just Vulkan, Mac)
- Support more capabilities like larger context window, schema enforcement, speculative decoding etc.

## Changes

### Major

- Use llama.cpp for offline chat models
- Support larger context window
- Automatically apply the appropriate chat template, so offline chat models not using the llama2 format are now supported
- Use better default offline chat model, NousResearch/Hermes-2-Pro-Mistral-7B
- Enable extract queries actor to improve notes search with offline chat
- Update documentation to use llama.cpp for offline chat in Khoj

### Minor

- Migrate to use NousResearch's Hermes-2-Pro 7B as default offline chat model in khoj.yml
- Rename GPT4AllChatProcessor to OfflineChatProcessor Config, Model
- Only add location to image prompt generator when location known
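The chat template change listed above is easier to see with a small example. The sketch below is not Khoj's or llama.cpp's implementation; it only illustrates why one fixed prompt format cannot serve every model. It renders the same conversation in ChatML (the format Hermes-2-Pro models are trained on) and in the Llama 2 chat format. The names `render_chatml`, `render_llama2`, `TEMPLATES` and `render_prompt` are hypothetical, and in practice the template is typically read from the model's GGUF metadata rather than from a hand-written lookup table.

```python
from typing import Callable, Dict, List

# A chat message: {"role": "system" | "user" | "assistant", "content": "..."}
Message = Dict[str, str]


def render_chatml(messages: List[Message]) -> str:
    """Render messages in ChatML, the format used by Hermes-2-Pro models."""
    prompt = ""
    for message in messages:
        prompt += f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
    # Leave the assistant turn open so the model generates the reply.
    return prompt + "<|im_start|>assistant\n"


def render_llama2(messages: List[Message]) -> str:
    """Render one system + user exchange in the Llama 2 chat format."""
    system = next((m["content"] for m in messages if m["role"] == "system"), "")
    user = next(m["content"] for m in messages if m["role"] == "user")
    system_block = f"<<SYS>>\n{system}\n<</SYS>>\n\n" if system else ""
    return f"[INST] {system_block}{user} [/INST]"


# Hypothetical lookup table: template name -> renderer (an assumption for this sketch).
TEMPLATES: Dict[str, Callable[[List[Message]], str]] = {
    "chatml": render_chatml,
    "llama-2": render_llama2,
}


def render_prompt(template_name: str, messages: List[Message]) -> str:
    """Pick the renderer that matches the model's template name."""
    return TEMPLATES[template_name](messages)
```

Calling `render_prompt("chatml", messages)` and `render_prompt("llama-2", messages)` on the same message list yields two different prompt strings. A model trained on one format generally responds poorly to the other, which is why selecting the template per model matters.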

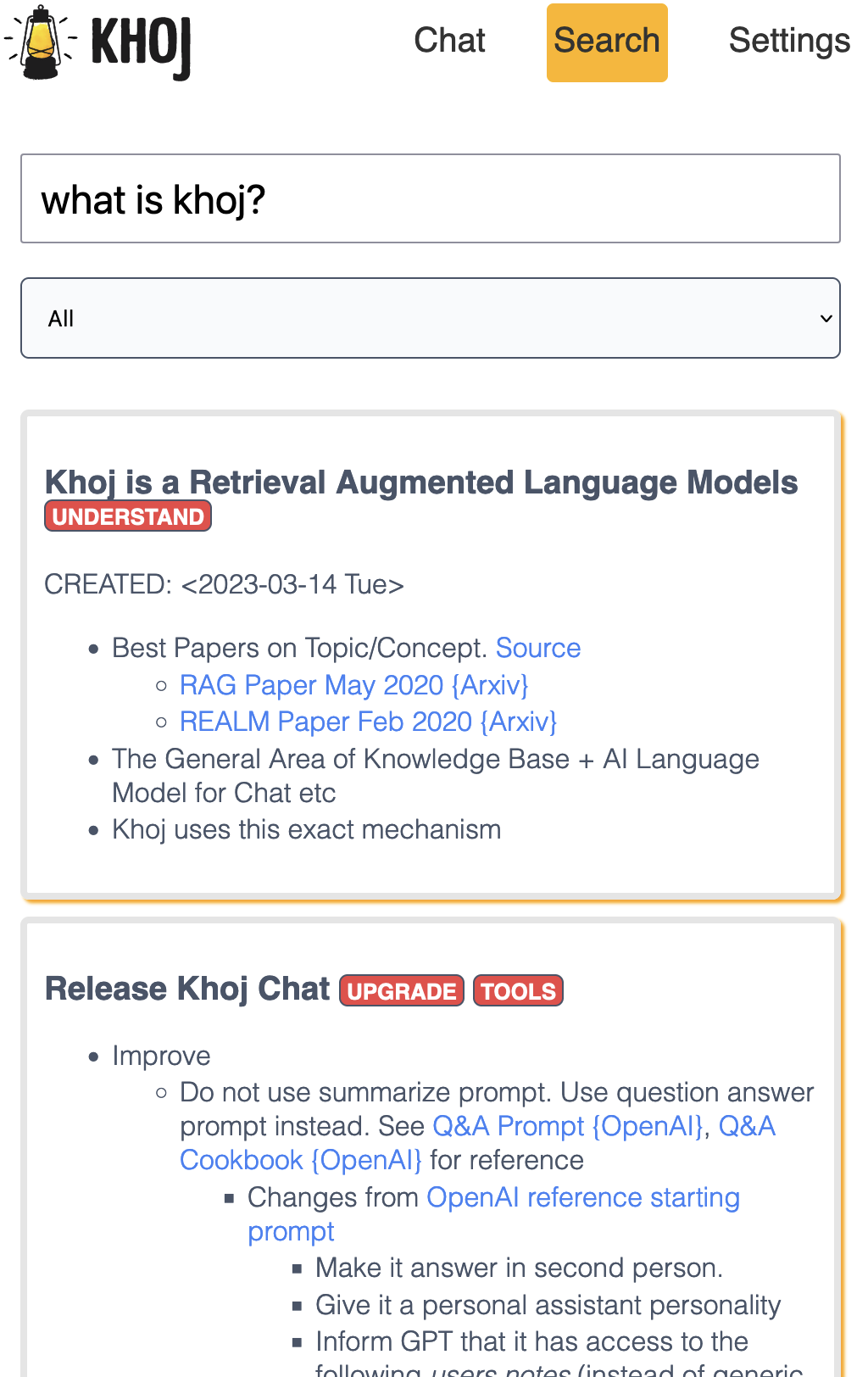

An AI personal assistant for your digital brain

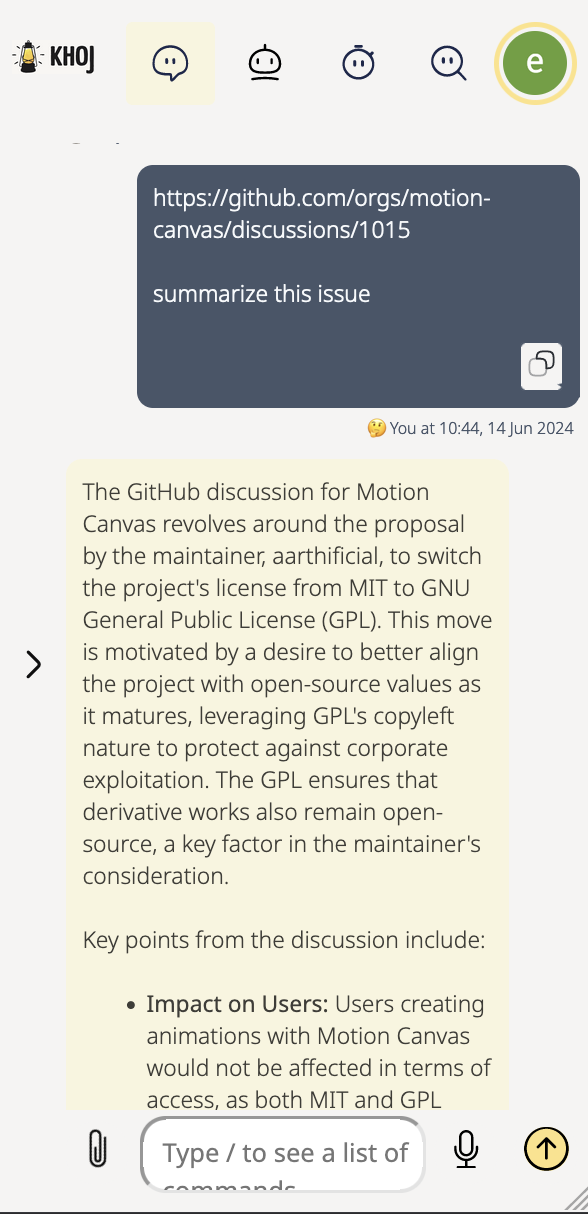

Khoj is an AI application to search and chat with your notes and documents.

It is open-source, self-hostable and accessible on Desktop, Emacs, Obsidian, Web and WhatsApp.

It works with PDF, Markdown, Org-mode, Notion files and GitHub repositories.

It can paint, search the internet and understand speech.

Contributors

Cheers to our awesome contributors! 🎉

Made with contrib.rocks.

Languages

- Python: 51%
- TypeScript: 36.1%
- CSS: 4.1%
- HTML: 3.2%
- Emacs Lisp: 2.4%
- Other: 3.1%